Multi-Layered Backend Architecture: From Express Chaos to Enterprise Scalability

Master the five-layer architecture pattern that separates routing, business logic, and data access into isolated, testable layers: a blueprint widely used in enterprise Node.js applications.

Every backend developer has lived this nightmare: you start building an Express app, and six months later, MongoDB queries, HTTP headers, and validation logic are all tangled together in a single function. You change a database index, and suddenly a user-facing API breaks. The team can't figure out why because nobody knows where to look.

Express.js is deliberately unopinionated. It gives you routing and middleware, then steps back completely. That flexibility is magical for prototypes, but in production, it becomes a liability. Without enforced architectural boundaries, applications degrade into tightly coupled monoliths that are slow to extend, risky to refactor, and expensive to test.

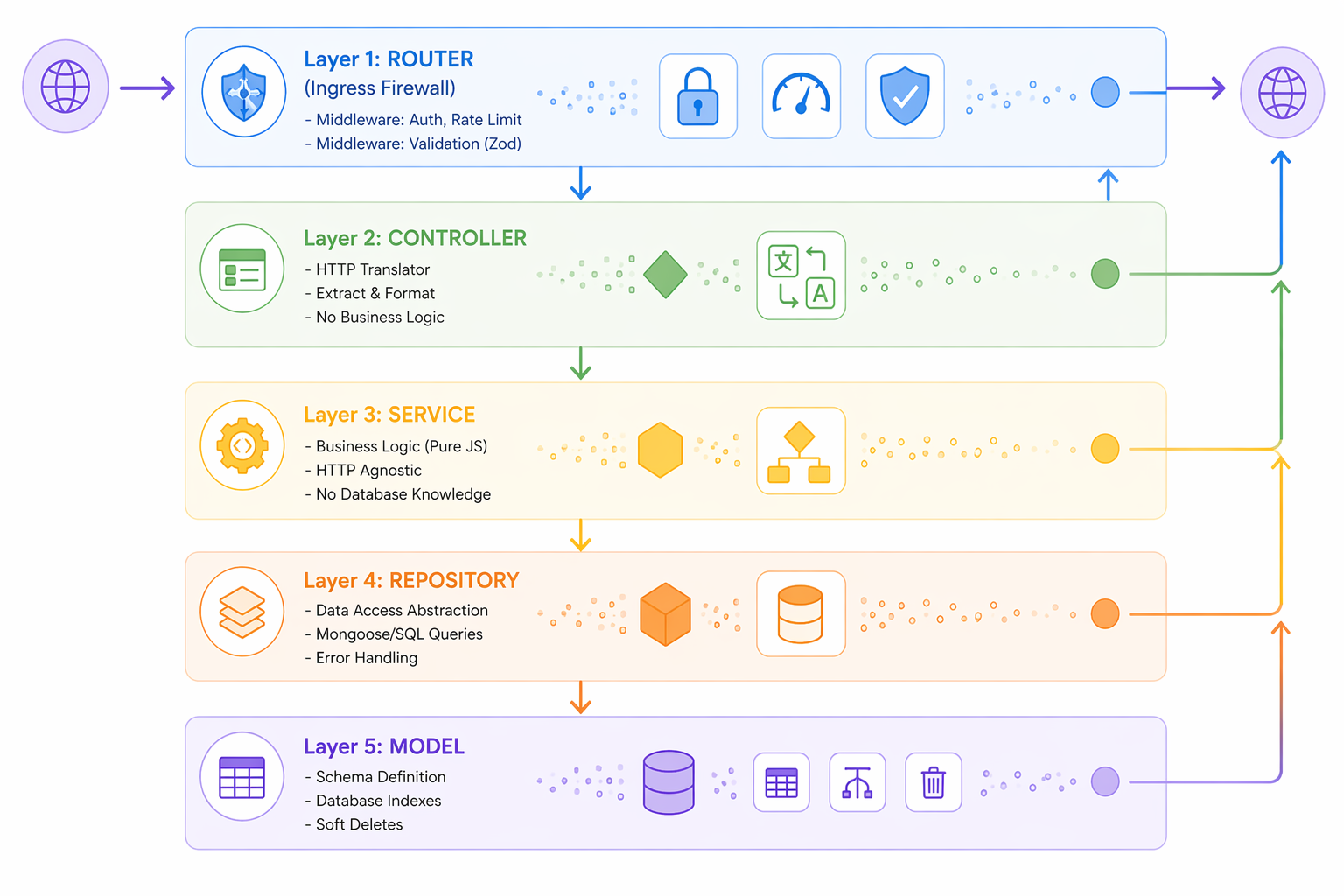

The solution isn't a new framework; it's a time-tested blueprint: multi-layered vertical architecture. By isolating concerns into five distinct layers, you transform Express from a loose collection of endpoints into a predictable, scalable machine. Every layer has exactly one job, talks only to the layer directly beneath it, and knows nothing about the layers above it. This isn't just theory; layered designs like this are a common way for large engineering teams to structure Node.js backends.

In this article, we'll trace a single HTTP request through all five layers, watching the data transform as it crosses each boundary. By the end, you'll understand not just how to build layered architecture, but why every enterprise system requires it.


The Problem: Express Without Boundaries

Consider a typical Express endpoint that updates a user's profile:

```js
// ❌ Anti-pattern: Everything in one place
app.patch('/users/:id', authenticateUser, async (req, res) => {
  try {
    const user = await User.findById(req.params.id);
    if (!user) return res.status(404).json({ error: 'User not found' });

    // Validation mixed with business logic
    if (req.body.bio && req.body.bio.length > 500) {
      return res.status(400).json({ error: 'Bio too long' });
    }

    // Database operation inline
    user.bio = req.body.bio;
    user.avatar = req.body.avatar;
    await user.save();

    // HTTP response formatting
    res.json({
      success: true,
      data: { id: user._id, bio: user.bio, avatar: user.avatar }
    });
  } catch (err) {
    res.status(500).json({ error: 'Server error' });
  }
});
```

This looks innocent, but it creates four serious problems:

- Tightly Coupled: One handler knows about MongoDB, HTTP status codes, and business rules all at once, so a change to any of them touches the same function.

- Not Reusable: Want to update a profile from a background task? You can't use this logic; it's baked into the Express handler.

- Hard to Test: To unit-test profile updates, you need a real MongoDB instance or heavy mocking of the model. You can't inject a test repository.

- Lack of Enforcement: Without boundaries, the next developer adds database queries to the controller, creating more spaghetti.

The problem compounds at scale. When a team has 20 developers, each writing code differently, the codebase becomes unpredictable and fragile. Technical debt explodes.

The Solution: Five-Layer Architecture

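Enterprise applications enforce strict vertical boundaries:

- Layer 1: Router + Middleware (ingress firewall)

- Layer 2: Controller (HTTP translator)

- Layer 3: Service (business logic)

- Layer 4: Repository (data access)

- Layer 5: Model (schema definition)

Each layer has ONE job. Each layer calls only the layer directly below it and is BLIND to everything else. This isn't just about code organization; it's about enforcing architectural contracts.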

Layer 1: The Ingress Firewall (Router + Middleware)

Think of your routes as a firewall. Before a request touches business logic, it must survive a gauntlet of middleware checks.

```js
// routes/userRoutes.js (the path app.js loads later in this article)
const express = require('express');
const router = express.Router();

const { authenticateJWT } = require('../middleware/auth');
const { rateLimitPerEndpoint } = require('../middleware/rateLimit');
const { validateZodSchema } = require('../middleware/validation');
const userController = require('../controllers/userController');
const { updateProfileSchema } = require('../schemas/userSchemas');

// Standard route definition acting as an ingress firewall
router.patch(
  '/users/:id',
  authenticateJWT,                                 // Step 1: Verify JWT token
  rateLimitPerEndpoint('updateProfile', 5, '15m'), // Step 2: Rate limiting
  validateZodSchema(updateProfileSchema),          // Step 3: Validate payload
  userController.updateProfile                     // Step 4: Controller (data is clean)
);

module.exports = router;
```

Why This Matters

By the time a request reaches the controller, it has been vetted by:

- Authentication Middleware: Token verified, user attached to request

- Rate Limiting Middleware: Abuse attempts rejected with 429 status

- Validation Middleware: Schema verified with Zod

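Because shape and type are checked at the edge, the controller and service layers don't need defensive code for malformed input. They can assume the payload is well-formed and concentrate on their own job; the service still owns business rules.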
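To make the validation step concrete, here is a minimal sketch of what a schema-validation middleware factory might look like. It is not the article's validateZodSchema: the hand-rolled `profileRules` object stands in for a Zod schema so the snippet runs without dependencies, and `makeValidator`, `fakeRes`, and the rule format are hypothetical names introduced for illustration.

```js
// Hypothetical rule set standing in for a Zod schema (assumption: a real
// middleware would delegate to the schema library instead).
const profileRules = {
  bio: (v) => typeof v === 'string' && v.length <= 500,
  avatar: (v) => typeof v === 'string' && /^https?:\/\/.+/.test(v),
};

// Middleware factory: rejects unknown keys and invalid values with a 400,
// otherwise hands control to the next handler in the chain.
function makeValidator(rules) {
  return function validate(req, res, next) {
    const body = req.body || {};
    const problems = [];
    for (const [key, value] of Object.entries(body)) {
      if (!Object.prototype.hasOwnProperty.call(rules, key)) {
        problems.push(`unknown field: ${key}`);
      } else if (!rules[key](value)) {
        problems.push(`invalid field: ${key}`);
      }
    }
    if (problems.length > 0) {
      return res.status(400).json({ success: false, errors: problems });
    }
    next();
  };
}

// Minimal fake of the Express response object, just enough for this demo.
function fakeRes() {
  return {
    statusCode: 200,
    body: null,
    status(code) { this.statusCode = code; return this; },
    json(payload) { this.body = payload; return this; },
  };
}

const validateProfile = makeValidator(profileRules);

// A clean payload reaches next(); a dirty one is stopped at the edge.
let reachedController = false;
const okRes = fakeRes();
validateProfile({ body: { bio: 'Hello' } }, okRes, () => { reachedController = true; });

const badRes = fakeRes();
validateProfile({ body: { bio: 'x'.repeat(501), isAdmin: true } }, badRes, () => {});

console.log(reachedController);  // true
console.log(badRes.statusCode);  // 400
console.log(badRes.body.errors); // [ 'invalid field: bio', 'unknown field: isAdmin' ]
```

The point of the factory shape is that the route file only declares which rules apply; the rejection logic lives in one place and never leaks into controllers.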

Layer 2: The Controller (HTTP Translator)

The controller's ONLY job is to translate HTTP into function calls.

```js
// controllers/userController.js
const userService = require('../services/userService');
const { successResponse, errorResponse } = require('../utils/responseFormatter');

const updateProfile = async (req, res, next) => {
  try {
    // Extract clean data from request
    const userId = req.params.id;
    const updateData = req.body;    // Already validated by Zod middleware
    const requestingUser = req.user; // Attached by auth middleware

    // Call service (pure business logic)
    const updatedUser = await userService.updateProfile(userId, updateData);

    // Format response
    res.status(200).json(successResponse(updatedUser));
  } catch (err) {
    // Forward error to centralized error handler
    next(err);
  }
};

module.exports = { updateProfile };
```

Cardinal Rules for Controllers

- Never import database models: Controllers have zero awareness that MongoDB exists

- No business logic: Controllers don't validate business rules (service does that)

- No data transformation: Only extract and format (formatting is controller's domain)

- Always use try/catch: Forward errors to middleware via next(err)

This makes controllers easily testable and locked to HTTP concerns only.
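One way to see why this makes controllers testable is to exercise one without Express at all. The sketch below assumes a small variation on the article's controller: a hypothetical `makeUpdateProfile` factory that receives the service as an argument, so a stub can be passed in. `fakeRes` and the stub services are also illustrative, not part of the original code.

```js
// Hypothetical factory: the controller receives its service as an argument
// instead of require()-ing it, so a test can pass in a stub.
function makeUpdateProfile(userService) {
  return async function updateProfile(req, res, next) {
    try {
      const updatedUser = await userService.updateProfile(req.params.id, req.body);
      res.status(200).json({ success: true, data: updatedUser });
    } catch (err) {
      next(err); // the controller never formats errors itself
    }
  };
}

// Minimal stand-in for the Express response object.
function fakeRes() {
  return {
    statusCode: null,
    body: null,
    status(code) { this.statusCode = code; return this; },
    json(payload) { this.body = payload; return this; },
  };
}

async function demo() {
  // Happy path: the stub service resolves, the controller formats a 200.
  const okService = { updateProfile: async (id, data) => ({ id, ...data }) };
  const res = fakeRes();
  await makeUpdateProfile(okService)(
    { params: { id: 'user123' }, body: { bio: 'Updated' } },
    res,
    () => {}
  );

  // Failure path: the stub rejects, the controller forwards the error.
  const boom = new Error('User not found');
  const failingService = { updateProfile: async () => { throw boom; } };
  let forwarded = null;
  await makeUpdateProfile(failingService)(
    { params: { id: 'missing' }, body: {} },
    fakeRes(),
    (err) => { forwarded = err; }
  );

  return { res, forwarded, boom };
}

const demoResult = demo();
demoResult.then(({ res, forwarded, boom }) => {
  console.log(res.statusCode, JSON.stringify(res.body));
  // 200 {"success":true,"data":{"id":"user123","bio":"Updated"}}
  console.log(forwarded === boom); // true
});
```

No HTTP server, database, or network is involved; the only things under test are the translation from request to function call and the forwarding of errors.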

Layer 3: The Service (Business Logic Engine)

Here's where the actual work happens. Services are pure JavaScript, completely divorced from HTTP.

```js
// services/userService.js
const userRepository = require('../repositories/userRepository');
// NotFoundError is used below, so it must be imported too
const { ValidationError, NotFoundError } = require('../errors/customErrors');

const updateProfile = async (userId, updateData) => {
  // Pure JavaScript: no req, res, next, no HTTP context

  // Step 1: Business validation
  if (updateData.bio && updateData.bio.length > 500) {
    throw new ValidationError('Bio cannot exceed 500 characters');
  }

  if (updateData.avatar) {
    // Validate URL format or check upload permissions
    const isValidUrl = /^https?:\/\/.+\..+/.test(updateData.avatar);
    if (!isValidUrl) {
      throw new ValidationError('Avatar must be a valid HTTP(S) URL');
    }
  }

  // Step 2: Call repository (no DB queries here, repository handles that)
  const user = await userRepository.findById(userId);
  if (!user) {
    throw new NotFoundError(`User with ID ${userId} not found`);
  }

  // Step 3: Prepare clean data and persist
  const updatedUser = await userRepository.update(userId, {
    bio: updateData.bio || user.bio,
    avatar: updateData.avatar || user.avatar,
    updatedAt: new Date()
  });

  return updatedUser;
};

module.exports = { updateProfile };
```

Why Services Are HTTP-Agnostic

Notice: no req, res, or next anywhere in the service. The same function can therefore be called from any entry point:

```js
// Scenario 1: API request
// router → controller → service

// Scenario 2: CLI command
const service = require('./services/userService');

async function runFromCli() {
  await service.updateProfile('user123', { bio: 'Updated from CLI' });
}
runFromCli().catch(console.error);

// Scenario 3: Background job (Cron)
const cron = require('node-cron');
cron.schedule('0 0 * * *', async () => {
  await service.updateProfile('user123', { bio: 'Updated from background job' });
});

// Scenario 4: Message queue (RabbitMQ, Kafka)
// `consumer` stands for your queue client's consumer object (setup omitted)
consumer.on('message', async (msg) => {
  const { userId, data } = JSON.parse(msg);
  await service.updateProfile(userId, data);
});
```

Same service logic. Different interfaces. This is the power of HTTP-agnostic architecture.

Layer 4: The Repository (Data Access Abstraction)

The repository is a protective airgap between business logic and database technology.

```js
// repositories/userRepository.js
const User = require('../models/User');
const { NotFoundError, DatabaseError } = require('../errors/customErrors');

class UserRepository {
  async findById(id) {
    // Only repository touches Mongoose
    const user = await User.findOne({ _id: id, deletedAt: null });

    // Repository enforces explicit error handling
    if (!user) {
      throw new NotFoundError(`User ${id} not found`);
    }

    // Map Mongoose doc to plain object
    return this._serialize(user);
  }

  async update(id, data) {
    try {
      // $inc bumps syncVersion atomically: no extra read, and no crash
      // when the user does not exist. Soft-deleted users are skipped.
      const user = await User.findOneAndUpdate(
        { _id: id, deletedAt: null },
        { $set: data, $inc: { syncVersion: 1 } },
        { new: true, runValidators: true }
      );

      if (!user) {
        throw new NotFoundError(`User ${id} not found`);
      }

      return this._serialize(user);
    } catch (err) {
      if (err instanceof NotFoundError) throw err;
      throw new DatabaseError(`Failed to update user: ${err.message}`);
    }
  }

  // Helper to strip sensitive fields and map _id → id
  _serialize(doc) {
    const { _id, password, __v, ...clean } = doc.toObject();
    return { id: _id.toString(), ...clean };
  }
}

module.exports = new UserRepository();
```

The Repository Contract

- Encapsulate all database operations: Service never touches Mongoose

- Throw custom errors: Never return null/undefined; throw NotFoundError

- Serialize responses: Strip sensitive fields, map _id to id

- Handle soft deletes: Always filter { deletedAt: null }
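The serialization rule is easy to illustrate in isolation. The following is a small sketch, assuming `raw` mimics the plain object a Mongoose document would produce via toObject(); `serializeUser` and the sample values are hypothetical.

```js
// Assumption: `raw` mimics the plain-object form of a stored user; the
// field names follow the article's User model.
function serializeUser(raw) {
  // Drop fields that must never leave the data layer, and rename _id to id.
  const { _id, password, __v, ...rest } = raw;
  return { id: String(_id), ...rest };
}

const raw = {
  _id: 'abc123',
  email: 'user@example.com',
  password: 'hashed-secret',
  bio: 'Hello',
  __v: 3,
};

const user = serializeUser(raw);
console.log(user);               // { id: 'abc123', email: 'user@example.com', bio: 'Hello' }
console.log('password' in user); // false
```

Because the service only ever sees this plain shape, nothing above the repository can call database methods by accident.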

The Interchangeable Airgap

This is the killer feature. If your team decides to swap MongoDB for PostgreSQL:

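```js
// Only this layer changes. Service & controller remain identical.
// New PostgreSQL implementation (assumes the `pg` package; Pool reads
// connection settings from the standard PG* environment variables)
const { Pool } = require('pg');
const { NotFoundError } = require('../errors/customErrors');

const db = new Pool();

class UserRepository {
  async findById(id) {
    // db.query resolves to a result object; matching rows are in `rows`
    const { rows } = await db.query(
      'SELECT * FROM users WHERE id = $1 AND deleted_at IS NULL',
      [id]
    );
    if (rows.length === 0) throw new NotFoundError(`User ${id} not found`);
    return rows[0];
  }

  // ... rest of methods
}
```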

The service has zero idea you swapped databases.
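The swap can be demonstrated without any database. In this sketch, two hypothetical in-memory repositories honor the same contract, and a hypothetical `makeUserService` factory receives whichever one it is given. The class names, `getDisplayBio`, and the seed data are illustrative assumptions rather than part of the article's code.

```js
// Two hypothetical repositories that honor the same contract:
//   findById(id) -> Promise<plain user object>, rejects if the user is missing.
// Neither talks to a real database; they only differ in how they store data.
class MapUserRepository {
  constructor(users) {
    this.users = new Map(users.map((u) => [u.id, u]));
  }
  async findById(id) {
    const user = this.users.get(id);
    if (!user) throw new Error(`User ${id} not found`);
    return { ...user };
  }
}

class ArrayUserRepository {
  constructor(users) {
    this.rows = users;
  }
  async findById(id) {
    const row = this.rows.find((r) => r.id === id);
    if (!row) throw new Error(`User ${id} not found`);
    return { ...row };
  }
}

// The service only knows the contract, not which class implements it.
function makeUserService(userRepository) {
  return {
    async getDisplayBio(userId) {
      const user = await userRepository.findById(userId);
      return user.bio ? user.bio.trim() : '(no bio yet)';
    },
  };
}

const seed = [
  { id: 'user123', bio: '  Hello  ' },
  { id: 'user456', bio: null },
];

const results = Promise.all([
  makeUserService(new MapUserRepository(seed)).getDisplayBio('user123'),
  makeUserService(new ArrayUserRepository(seed)).getDisplayBio('user123'),
  makeUserService(new ArrayUserRepository(seed)).getDisplayBio('user456'),
]);

results.then((bios) => console.log(bios)); // [ 'Hello', 'Hello', '(no bio yet)' ]
```

The guarantee only holds while every implementation returns the same shapes and throws the same error types, which is why the repository contract matters more than the storage engine behind it.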

Layer 5: The Model (Schema Definition)

Models define both how data is validated (Zod) and how it's stored (Mongoose).

```js
// schemas/userSchemas.js
const { z } = require('zod');

// Zod schema: Validates incoming API requests
const updateProfileSchema = z.object({
  bio: z.string().max(500).optional(),
  avatar: z.string().url().optional(),
  // Other fields are rejected (strict validation)
}).strict();

module.exports = { updateProfileSchema };
```

```js
// models/User.js
const mongoose = require('mongoose');

// Mongoose schema: Defines database storage, indexes, and type safety
// (_id is not declared: Mongoose adds and generates it automatically)
const userSchema = new mongoose.Schema({
  bio: {
    type: String,
    maxlength: 500,
    default: null
  },
  avatar: {
    type: String,
    match: /^https?:\/\/.+/, // match skips null/empty values
    default: null
  },
  email: {
    type: String,
    required: true,
    unique: true, // unique already creates an index
    lowercase: true
  },
  password: {
    type: String,
    required: true,
    select: false // Never returned in queries
  },
  deletedAt: {
    type: Date,
    default: null,
    index: true
  },
  syncVersion: {
    type: Number,
    default: 0
  }
}, { timestamps: true });

// Ensure every find-style query (find, findOne, findOneAndUpdate, ...)
// filters soft-deleted records
userSchema.pre(/^find/, function() {
  this.where({ deletedAt: null });
});

module.exports = mongoose.model('User', userSchema);
```

Why Dual Schemas

- Zod (API layer): JSON-friendly, type-safe, blocks extra fields

- Mongoose (DB layer): Indexes, type coercion, field selection, soft deletes

Having both guarantees safety at both ends of the application.

Error Handling: The Glue That Holds It Together

When any layer throws an error, a centralized error handler catches it and formats a standardized response.

```js
// errors/customErrors.js
class BaseError extends Error {
  constructor(message, statusCode, internalCode) {
    super(message);
    this.name = this.constructor.name;
    this.statusCode = statusCode;
    this.internalCode = internalCode;
  }
}

class NotFoundError extends BaseError {
  constructor(message) {
    super(message, 404, 'NOT_FOUND');
  }
}

class ValidationError extends BaseError {
  constructor(message) {
    super(message, 400, 'VALIDATION_ERROR');
  }
}

class UnauthorizedError extends BaseError {
  constructor(message = 'Unauthorized') {
    super(message, 401, 'UNAUTHORIZED');
  }
}

// Used by the repository layer to wrap unexpected database failures
class DatabaseError extends BaseError {
  constructor(message) {
    super(message, 500, 'DATABASE_ERROR');
  }
}

module.exports = {
  BaseError,
  NotFoundError,
  ValidationError,
  UnauthorizedError,
  DatabaseError
};
```

```js
// middleware/errorHandler.js
const errorHandler = (err, req, res, next) => {
  const statusCode = err.statusCode || 500;
  const internalCode = err.internalCode || 'INTERNAL_SERVER_ERROR';

  // Standardized error response
  res.status(statusCode).json({
    success: false,
    error: {
      message: err.message,
      code: internalCode,
      timestamp: new Date().toISOString()
    }
  });

  // Log to external service (Sentry, DataDog, etc.)
  console.error(`[${internalCode}]`, err.message);
};

module.exports = errorHandler;
```

```js
// app.js
const express = require('express');
const errorHandler = require('./middleware/errorHandler');
const userRoutes = require('./routes/userRoutes');

const app = express();

// Parse JSON bodies so the validation middleware can read req.body
app.use(express.json());

// Routes FIRST
app.use('/api', userRoutes);

// Error handler LAST (catches all errors from routes & middleware above)
app.use(errorHandler);

module.exports = app;
```

Error Flow in Action

- Repository throws NotFoundError('User 123 not found')

- Service doesn't catch it, so it propagates upward

- Controller's catch (err) block calls next(err)

- Error handler middleware formats the response: { success: false, error: { message: '...', code: 'NOT_FOUND' } }

- Client receives standardized 404 JSON

Every error in the system follows this path. Consistency guaranteed.
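The flow above can be simulated end to end in a few lines. This is a dependency-free sketch rather than the article's actual files: the error classes are trimmed copies, `getUser` and `fakeRes` are hypothetical, and the timestamp field is omitted so the output is deterministic.

```js
// Trimmed copies of the article's error classes.
class BaseError extends Error {
  constructor(message, statusCode, internalCode) {
    super(message);
    this.statusCode = statusCode;
    this.internalCode = internalCode;
  }
}
class NotFoundError extends BaseError {
  constructor(message) { super(message, 404, 'NOT_FOUND'); }
}

// Repository: throws a typed error instead of returning null.
const userRepository = {
  async findById(id) { throw new NotFoundError(`User ${id} not found`); },
};

// Service: no try/catch, so the rejection propagates to the caller.
const userService = {
  async getUser(id) { return userRepository.findById(id); },
};

// Controller (hypothetical getUser endpoint): catches and forwards.
async function getUser(req, res, next) {
  try {
    const user = await userService.getUser(req.params.id);
    res.status(200).json({ success: true, data: user });
  } catch (err) {
    next(err);
  }
}

// Centralized handler: the only place that turns errors into HTTP.
function errorHandler(err, req, res, next) {
  res.status(err.statusCode || 500).json({
    success: false,
    error: {
      message: err.message,
      code: err.internalCode || 'INTERNAL_SERVER_ERROR',
    },
  });
}

// Minimal stand-in for the Express response object.
function fakeRes() {
  return {
    statusCode: null,
    body: null,
    status(code) { this.statusCode = code; return this; },
    json(payload) { this.body = payload; return this; },
  };
}

const req = { params: { id: '123' } };
const res = fakeRes();
const done = getUser(req, res, (err) => errorHandler(err, req, res, () => {}));

done.then(() => {
  console.log(res.statusCode, res.body.error.code); // 404 NOT_FOUND
  console.log(res.body.error.message);              // User 123 not found
});
```

Only the handler knows about status codes; the repository and service communicate purely through error types.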

Tracing a Complete Request

Let's trace the same PATCH request through all five layers, start to finish.

Request arrives:

PATCH /api/users/user123

{ bio: "Updated", avatar: "https://..." }

Step 1: Ingress Firewall (Router + Middleware)

- authenticateJWT middleware runs
  - ✓ Verifies JWT token
  - ✓ Attaches { user: { id: 'admin1', role: 'admin' } } to req

- rateLimitPerEndpoint middleware runs
  - ✓ Checks request count from this IP
  - ✓ Allows 5 requests per 15 minutes
  - ✓ This is request #2 of 5, so it is allowed

- validateZodSchema middleware runs
  - ✓ Validates { bio, avatar } against Zod schema
  - ✓ Checks bio.length ≤ 500
  - ✓ Checks avatar is valid URL
  - ✓ Payload is CLEAN, so the request proceeds

Step 2: Controller

```js
// userController.updateProfile(req, res, next)
{
  userId = 'user123' // from req.params.id
  updateData = {
    bio: "Updated",
    avatar: "https://..." // Already validated by middleware
  }
  requestingUser = { // From auth middleware
    id: 'admin1',
    role: 'admin'
  }

  // Call service
  updatedUser = await userService.updateProfile('user123', updateData)

  // Format response
  res.status(200).json({ success: true, data: updatedUser })
}
```

Step 3: Service (Business Logic)

```js
// userService.updateProfile(userId, updateData)
{
  // Business validation (second validation layer)
  if (updateData.bio.length > 500) {
    throw new ValidationError('...') // Never happens; Zod already checked
  }

  // Repository call
  user = await userRepository.findById('user123') // Throws NotFoundError if missing

  // Update user
  updatedUser = await userRepository.update('user123', {
    bio: updateData.bio,
    avatar: updateData.avatar,
    updatedAt: new Date()
  })

  return updatedUser
}
```

Step 4: Repository (Database)

```js
// userRepository.update(userId, data)
{
  // Mongoose query
  user = await User.findByIdAndUpdate(
    'user123',
    {
      bio: 'Updated',
      avatar: 'https://...',
      syncVersion: 2,
      updatedAt: new Date()
    },
    { new: true, runValidators: true }
  )
  // Mongoose finds document, updates fields, saves to MongoDB

  // Serialize response
  return {
    id: user._id,
    bio: 'Updated',
    avatar: 'https://...',
    email: 'user@example.com',
    // password EXCLUDED (select: false in schema)
    // timestamps: true stores these as top-level fields
    createdAt: '...',
    updatedAt: '...'
  }
}
```

Step 5: Back Up the Stack

- Service returns { id, bio, avatar, ... }

- Controller wraps it in { success: true, data: { ... } }

- HTTP response sent to client: 200 OK with JSON

Total time: ~20ms in this illustrative trace. Everything happened cleanly. Each layer did one job.

Common Mistakes: Violating the Boundaries

Mistake 1: Service Imports Database Model

```js
// ❌ WRONG
const User = require('../models/User');

const updateProfile = async (userId, data) => {
  const user = await User.findById(userId); // Service touches MongoDB
  user.bio = data.bio;
  await user.save();
  return user;
};
```

Why it's bad: Service now depends on Mongoose. Can't reuse from CLI. Hard to test.

Fix: Service calls repository. Repository touches database.

Mistake 2: Controller Has Business Logic

```js
// ❌ WRONG
app.patch('/users/:id', async (req, res) => {
  if (req.body.bio.length > 500) { // Business rule in controller
    return res.status(400).json({ error: 'Bio too long' });
  }
  // ...
});
```

Why it's bad: The same bio validation ends up duplicated in every controller that updates a profile.

Fix: Move to service. Middleware validates format (Zod). Service validates business rules.

Mistake 3: No Validation at Ingress

```js
// ❌ WRONG
app.patch('/users/:id', async (req, res) => {
  // No middleware validation
  // Service must check req.body.bio exists
  // Service must check it's a string
  // Service must check it's ≤ 500 chars
});
```

Why it's bad: Inner layers bloated with defensive code.

Fix: Zod middleware validates shape/type. Service validates business rules. Controller assumes clean data.

Mistake 4: Repository Returns Mongoose Documents

```js
// ❌ WRONG
const findById = async (id) => {
  return await User.findById(id); // Returns Mongoose document with methods
};
```

Why it's bad: Service receives objects with Mongoose methods such as .save() and .toObject(), so the database library leaks into the business layer.

Fix: Always serialize. Return plain objects.

Why This Matters: The SOLID Principles Foundation

This architecture is the practical implementation of SOLID principles in JavaScript:

| Principle | How Layers Enforce It |
|---|---|
| Single Responsibility | Router handles HTTP. Controller translates. Service implements logic. Repository accesses DB. Model defines structure. |
| Open/Closed | Add new services without changing existing code. Routes open for extension; controllers closed for modification. |
| Liskov Substitution | Swap MongoDB for PostgreSQL by replacing repository. Service doesn't care which database backs it. |
| Interface Segregation | Service has minimal interface: just functions. Repository has minimal interface: just CRUD. |
| Dependency Inversion | Services depend on repository abstraction, not concrete Mongoose queries. |

When your codebase follows SOLID principles, it becomes resilient to change and easy to scale.

From Monolith to Enterprise Machine

Compare two backend architectures at the moment they need to scale:

Monolithic Approach (No Boundaries)

- Engineer adds feature to controller

- Engineer queries database directly in controller

- Next engineer adds business logic to middleware

- Third engineer duplicates profile update logic in another endpoint

- Now you have 3 copies of the same logic with different validation

- Bugs appear in one endpoint but not others

- Swapping MongoDB becomes a 6-month rewrite

- Onboarding new developers takes weeks

Layered Approach (Strict Boundaries)

- Engineer creates service function

- Engineer creates repository method

- Feature is added in 2 files

- Same logic reused everywhere (DRY principle)

- Bug fix in service fixes all endpoints

- Swapping MongoDB takes 1 week (repository rewrite only)

- Onboarding new developers takes days (architecture is predictable)

- Team can safely hire 10 more engineers without chaos

Scale is enforced through structure, not discipline.

Practical Tips for Implementation

Start Simple, Extend Gradually

Don't over-engineer from day one. Start with a basic service and repository, then add patterns as complexity grows.

Use Classes or Closures Consistently

```js
// Classes work well for repositories (stateless singleton instances)
class UserRepository { /* ... */ }

// Functions work well for services (pure functions called many ways)
const updateProfile = async (userId, data) => { /* ... */ }
```

Test at Each Layer

- Unit test services: Inject mock repository, verify business logic

- Integration test repositories: Use real or in-memory database

- E2E test controllers: Full request/response cycle
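As an illustration of the first bullet, a service unit test with an injected mock repository might look like the sketch below. It assumes a hypothetical `makeUserService` factory that takes the repository as a parameter (the article's service uses require instead), a hand-written mock, and plain Error in place of ValidationError to keep the snippet dependency-free.

```js
const { deepStrictEqual, rejects } = require('node:assert/strict');

// Hypothetical service factory: same rules as the article's updateProfile,
// but the repository is passed in so a test can supply a mock.
function makeUserService(userRepository) {
  return {
    async updateProfile(userId, updateData) {
      if (updateData.bio && updateData.bio.length > 500) {
        throw new Error('Bio cannot exceed 500 characters');
      }
      const user = await userRepository.findById(userId);
      return userRepository.update(userId, {
        bio: updateData.bio || user.bio,
        avatar: updateData.avatar || user.avatar,
      });
    },
  };
}

// Hand-written mock: records every call and keeps data in memory.
function makeMockRepository(existingUser) {
  const calls = [];
  return {
    calls,
    async findById(id) {
      calls.push(['findById', id]);
      return { ...existingUser };
    },
    async update(id, data) {
      calls.push(['update', id, data]);
      return { id, ...data };
    },
  };
}

async function runServiceTests() {
  // Case 1: a valid update merges new and existing fields.
  const repo = makeMockRepository({
    id: 'user123',
    bio: 'Old',
    avatar: 'https://example.com/a.png',
  });
  const updated = await makeUserService(repo).updateProfile('user123', { bio: 'New' });
  deepStrictEqual(updated, {
    id: 'user123',
    bio: 'New',
    avatar: 'https://example.com/a.png',
  });

  // Case 2: an oversized bio is rejected before the repository is touched.
  const untouched = makeMockRepository({ id: 'user123' });
  await rejects(
    makeUserService(untouched).updateProfile('user123', { bio: 'x'.repeat(501) }),
    /500 characters/
  );
  deepStrictEqual(untouched.calls, []);

  return 'all service tests passed';
}

const serviceTests = runServiceTests();
serviceTests.then((summary) => console.log(summary)); // all service tests passed
```

Recording calls on the mock lets the second case verify an ordering guarantee (validation before persistence) that would be awkward to check against a real database.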

Use TypeScript (Optional but Powerful)

```ts
// Type safety across layers prevents mistakes
type UpdateProfileInput = z.infer<typeof updateProfileSchema>;

// findById is async, so unwrap the Promise to get the resolved shape
type UserOutput = Awaited<ReturnType<typeof userRepository.findById>>;
```

Monitoring & Observability

Each layer can emit structured logs:

```js
logger.info('route.updateProfile.called', { userId, method: 'PATCH' });
// Optional chaining: bio may be absent from a partial update
logger.info('service.updateProfile.validating', { bioLength: data.bio?.length });
logger.info('repository.update.querying', { updateFields: Object.keys(data) });
```
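The logger itself is left undefined in the article. A minimal sketch of one possible shape follows: a hypothetical `createLogger` that writes one JSON object per line to a pluggable sink. A production setup would more likely use an established logging library; the event names are taken from the calls above, and everything else is an assumption.

```js
// Hypothetical minimal logger: one JSON object per line, written to a sink
// function so the output can be captured in tests.
function createLogger(sink = (line) => process.stdout.write(line + '\n')) {
  const write = (level) => (event, fields = {}) =>
    sink(JSON.stringify({ level, event, ...fields }));
  return { info: write('info'), error: write('error') };
}

// Capture lines in memory for the demo instead of printing them directly.
const lines = [];
const logger = createLogger((line) => lines.push(line));

const data = { bio: 'Updated' };
logger.info('route.updateProfile.called', { userId: 'user123', method: 'PATCH' });
logger.info('service.updateProfile.validating', {
  bioLength: data.bio ? data.bio.length : 0,
});
logger.info('repository.update.querying', { updateFields: Object.keys(data) });

console.log(lines[0]);
// {"level":"info","event":"route.updateProfile.called","userId":"user123","method":"PATCH"}
console.log(lines.length); // 3
```

Prefixing each event with its layer name (route, service, repository) makes it possible to filter logs by layer and see where a request spent its time.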

Final Thoughts

Express.js doesn't fail because it's unopinionated. It fails because teams don't enforce opinions. Without architectural boundaries, applications degrade into unmaintainable spaghetti.

Multi-layered architecture is the antidote. It's not new; layered designs have a long track record in large backend systems, Node.js included. By ruthlessly isolating HTTP routing, business logic, and database operations, you prevent the technical debt that stalls projects.

This isn't about writing more code. It's about writing code in a predictable, testable, scalable way. Once you internalize the five layers, building features becomes mechanical. You know exactly where each piece belongs. You can onboard developers in days instead of weeks. You can refactor without fear.

The effort to adopt this pattern pays dividends the moment your team grows beyond one engineer or your codebase grows beyond a few thousand lines.

Mastering these boundaries transforms you from a developer who writes endpoints into an architect who builds systems.
